Scrape websites using the IMPORTXML function.

Syntax=IMPORTXML(url, xpath_query)url – The website address from which to extract dataxpath_query – This argument specifies the type of data to fetch from the webpage. Eg. “//title” to fetch the title.

Other examples of xpath_query:

“//h1” for H1 headings

“//@href” for all Links on a page

“//meta[@name=’description’]/@content” for Meta descriptions

Sample Usage=IMPORTXML("https://tryamigo.com/","//title")//Retrieves the page title.=IMPORTXML("https://www.bbc.com/news/world-asia-india-62722592", "//@href")

//Fetches all links from the page.

Click here to get a copy of the spreadsheet with examples.

Have you ever wanted to extract data from a website but didn’t know how? If so, then you’re in luck! In this blog post, we will learn how to scrape websites using Google Sheets. We will walk you through the process step-by-step, and show you some of the best practices for scraping data in google sheets. Let’s get started!

- What is web scraping?

- Why scrape websites

- Types of data that can be scraped

- IMPORTXML function

- Syntax of the IMPORTXML function

- How to scrape page titles

- Scrape data from multiple websites

- Scrape page links

- Scrape meta descriptions

What is website scraping or web scraping?

Simply put, web scraping is a method of extracting website data like Page titles, Headings, Meta descriptions, Internal links, External links, etc.

There are multiple ways to extract data from a website page –

- Using web crawlers

- Using some software

- Through coding

- Using Google Sheets

Using Google Sheets to scrape website data is the most common one and also the easiest one because it requires no coding knowledge.

I’ll show you how to do it in the later part of this article, but first, let’s understand why one might want to scrape website data.

Why scrape websites using Google Sheets

There are several and disparate reasons to scrape website data. A few is mentioned below.

- To get the list of all websites on a specific topic. For example, you want to make a list of all e-commerce websites in India.

- To find contact details like email addresses, and phone numbers from multiple websites.

- To monitor your competitor’s websites and track their progress.

- To get data for market research or lead generation.

These are only a few examples, there can be many other reasons as well to scrape website data. Now, the next question is, What kind of data can I scrape using Google Sheets?

Let’s find out!

What kind of data can I scrap by using Google Sheets?

The answer is- Any type of data.

You can scrape anything available on a webpage using Google Sheets. This includes:

- Text

- Images

- PDFs

- URLs

- Headings

- Page titles

- Numbers

- Internal links

- External links

Now let’s understand how to use Google Sheets to scrape website data.

How to scrape websites using Google Sheets?

We’ll scrape the data by using the IMPORTXML function in Google Sheets, as well as a couple of other ways–but this one is versatile enough and will serve our needs.

What is IMPORTXML?

IMPORTXML is a function that can be used to import data from a variety of sources into a Google Sheets spreadsheet. The function can be used to import data from an XML file, HTML file, or text file.

IMPORTXML is also helpful for importing data from websites that are not well-structured, such as HTML tables. Because IMPORTXML is a Google Sheets function, it is easy to use and does not require any programming skills.

With the IMPORTXML function, you can extract the data from many structured website data types such as XML, HTML, CSV/TSV, and RSS/ATOM XML feeds.

How to scrape page titles using Google sheets?

Let us learn how to scrape page titles using the IMPORTXML function in Google Sheets. We’ll scrape page title of the Amigo homepage, which is https://trymigo.com.

The formula will be:

=IMPORTXML("https://tryamigo.com/","//title") This will the page title, which is: Amigo | No code data integrations and real-time metrics.

Let us now learn step by step how to scrape websites using Google Sheets.

Step-1: Create columns for the data to import

Create a new spreadsheet in Google Sheets and create two columns – one for the website name and another for the page title.

Step 2: Copy and paste the URL

Once you’ve created the columns and entered the page title, enter the IMPORTXML formula in the cell you want into which you want to pull the data. In this case, B3.

You can see all three syntaxes in the bracket in the red box- URL, xpath_query, locale You can see the first argument is the URL

Now, go to the website you want to extract the data from. Copy the URL and paste it into the formula with double quotation marks.

The syntax will now be as shown below:

=IMPORTXML(“https://tryamigo.com/”,

Step-3: Enter XPATH_QUERY

After putting the URL, you have to enter the xpath_query (the kind of data you want to fetch).

As we have to fetch the title of the page, we’ll enter “//title” as xpath_query and close the bracket.

(Don’t forget to close each syntax with quotation marks)

Now it will look like this.

Now hit the ENTER, and the magic happens. After loading for a couple of seconds, it will show you the Page title– Amigo | No code data integrations and real-time metrics

How to Scrape data from multiple websites

Extracting data for one website is fairly easy. But what if you have to do it for 10 websites? Can you put the formula in every cell and extract the data? That’d be quite tedious.

I’m going to show you, how you can do it for multiple websites by putting the formula only once and just dragging the cursor down for all of them.

So, I’m going to do it for 5 websites. I’ll select 5 random websites and will fetch the Page titles for all 5 websites.

As I have created 2 columns already in the above example, I’ll create one more column for the URL and put all 5 URLs in that column, as shown below.

As you can see, I have put 5 URLs from different websites. Now, I’ll go to cell C3, where I applied the formula originally and will edit it.

Instead of putting the complete URL, select one cell, where the URL is placed. In our case, I’m going to select B3 (where I have stored Amigo’s URL)

See the picture below.

After you have edited the formula, just hit ENTER. Then drag the cursor down do the last cell where you want to apply the formula.

After you drag it down, all the page titles of 5 websites will appear.

See the picture below

You can do this for any number of websites.

Now, even if you change the URL of a particular website, it will automatically update and will give you the page title of the new URL.

Let me show you how.

I’ll just change the URL of Udemy and will replace it with Virat Kohli’s Wikipedia page

You can see that the page title has been automatically updated.

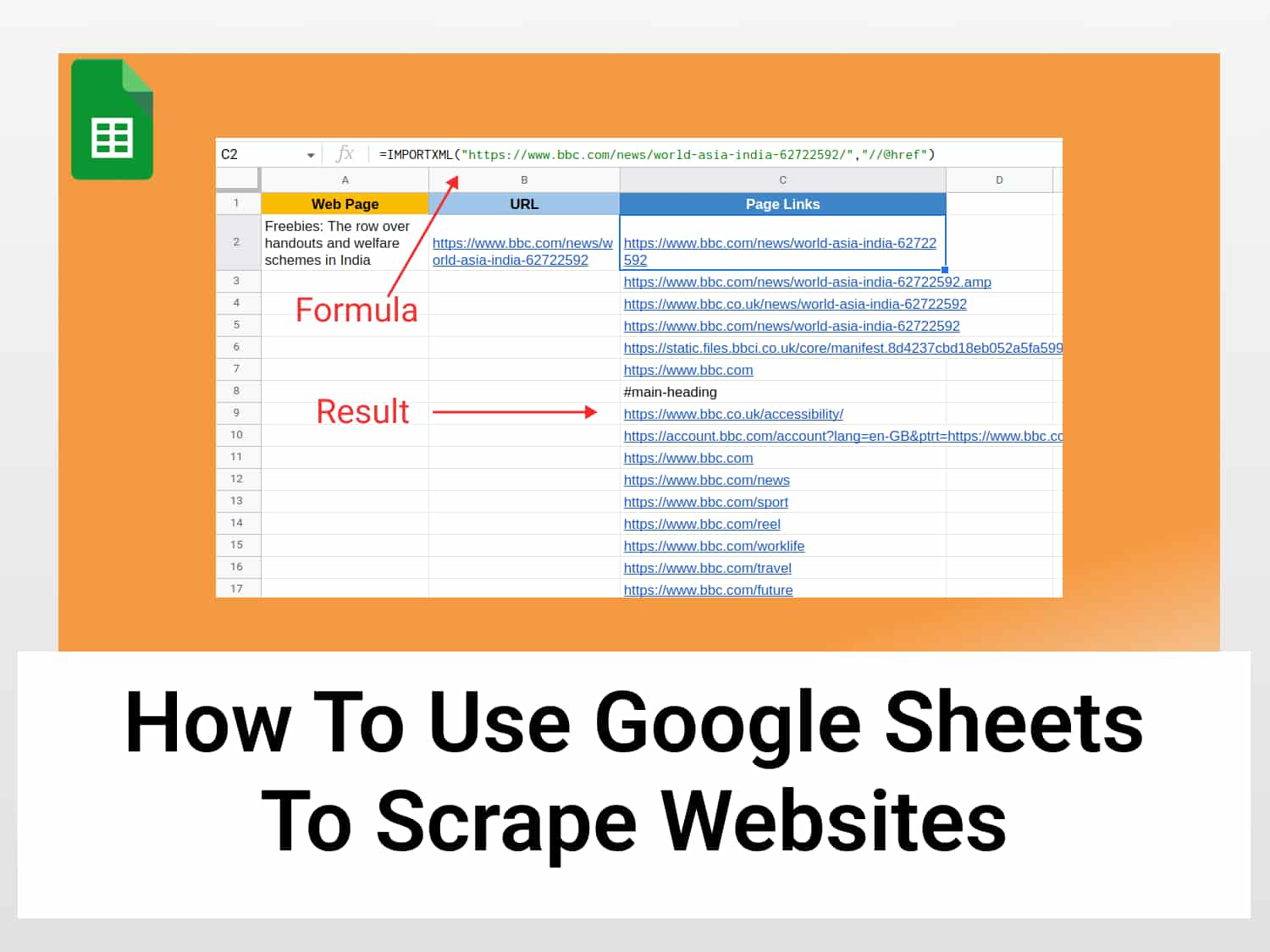

How to scrape all the page links of a web page using Google Sheets

We can scrape all page links of any website or webpage by using Google Sheets using the IMPORTXML function.

Let us try with an example. This time an article from bbc.com.

To scrape all the links of a webpage, only xpath_query will need to be changed. The xpath_query to scrape all the links is “//@href”.

So, the syntax will be as follows:

=IMPORTXML(“https://www.bbc.com/news/world-asia-india-62722592”, “//@href”) Press Enter and all the links from that page will be returned in the cells underneath the formula, as shown below.

To get the links for multiple web pages, just follow the same procedure as explained above on how to pull page titles from multiple websites.

How to scrape meta descriptions using Google sheets

We can also use the IMPORTXML function to scrape meta descriptions. This time we’ll use this New York Times article as our example.

The second parameter, the xpath_query, will have to be changed to the query parameter for the meta description, which is “//meta[@name=’description’]/@content”

So, the syntax will be as follows:

=IMPORTXML(“https://www.nytimes.com/2022/08/12/sports/soccer/bayern-munich-bundesliga.html”,”//meta[@name=‘description’]/@content”)

Press Enter, and the meta description will appear is a moment.

Again, you can follow the same procedure as earlier if you want to pull the meta description from multiple webpages. That’s all for now. I hope you have learnt, how to scrape website data using Google Sheets. It’s super easy. You just have to follow all the steps and you will be able to do it.

Click here to get the Google sheet for the above examples

Conclusion

There are different ways to scrape websites using Google Sheets. This article covers just one of them and hope scraping website by using google sheets is now easy for you. It should give you a taste of it.

Now that you know how to extract data from a website, it’s time to put your new skills to the test. Experiment with different websites and see what kind of data you can collect.

And if you ever need help scraping data, don’t hesitate to reach out to us! We would be happy to walk you through the process step-by-step.

See also

There are several other useful things you can do with Google Sheets. You can find them all here.

Some related articles you may find useful:

https://blog.tryamigo.com/sort-query-using-order-by-in-google-sheets/

https://blog.tryamigo.com/how-to-pull-crypto-prices-in-google-sheets/

https://blog.tryamigo.com/how-to-generate-qr-codes-in-google-sheets/